Audio/Video Latency and its Relation to AV Sync

A history of audio and video latency from the engineering perspective of consumer electronics.

Engineers use the term latency to describe the delay between a request and its response, or between an input and its output, such as the time needed to process or transmit data.1, 2

Now-obsolete analog CRT televisions and monitors had less than a microsecond of latency between when a video signal arrived at the display and when that signal could be seen as light on the display.3 Audio amplifiers had a similarly low latency. In this era of consumer electronics, the audio or video latency of a TV, monitor, or audio amplifier was negligible in almost all cases.

Once digital display technologies such as LCD panels became widely used in TVs and monitors and digital audio standards such as audio over HDMI became prevalent, digital processing of the input signal introduced a delay. This delay resulted in much higher audio and video latency than the analog devices of the past. A latency of greater than 100 milliseconds became common, even with digital devices that were given a digital input signal.4 This higher latency became problematic when real-time input from a user was represented as output on a digital display or amplifier. Some examples include computer usage, video games, and esports. Another problem, relating to audio/video synchronization (AV sync), arose when a setup had a separate audio system and television, each with a different output latency.

June, 2006: HDMI “Auto Lipsync”

The issue of audio/video synchronization was enough of a concern that the June 2006 HDMI Specification 1.3 introduced a standard way for HDMI “sinks” (such as TVs, receivers, soundbars, repeaters, etc.) to report their audio and video latency, enabling a new HDMI feature called “Auto Lipsync Correction”.5 This latency information was communicated over EDID data for video and audio in progressive and interlaced modes.6 With this new standard a receiver could be connected to a TV, learn about the TV’s video latency, and delay its audio output by the same amount that the TV was delaying video output, thus synchronizing the audio and video output of the A/V system.
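
As a rough illustration of the correction described above, the sketch below decodes a latency byte and computes the audio delay a receiver might apply. The byte encoding (milliseconds = (value - 1) × 2, with 0 meaning unknown and values above 251 treated as unusable) is an assumption about the EDID Latency Info fields that should be checked against the specification. The function names and the optional receiver latency parameter are hypothetical.

```python
from typing import Optional


def decode_latency_byte(value: int) -> Optional[int]:
    """Decode one EDID latency byte into milliseconds.

    Assumed encoding (verify against the HDMI specification):
    0 means unknown, 1..251 map to (value - 1) * 2 ms,
    and anything above 251 is treated as unusable here.
    """
    if not 0 <= value <= 255:
        raise ValueError("latency field must fit in one byte")
    if value == 0 or value > 251:
        return None
    return (value - 1) * 2


def receiver_audio_delay_ms(tv_video_latency_ms: int,
                            receiver_audio_latency_ms: int = 0) -> int:
    """Extra audio delay so that sound lines up with the TV's picture.

    The receiver's own audio latency (an assumed, optional input) is
    subtracted because that delay is already present in the audio path.
    """
    return max(0, tv_video_latency_ms - receiver_audio_latency_ms)


# Example: a TV reports the byte 51, decoded here as 100 ms of video latency.
tv_latency = decode_latency_byte(51)
if tv_latency is not None:
    print(receiver_audio_delay_ms(tv_latency, receiver_audio_latency_ms=20))
```

When the latency is reported as unknown, this sketch applies no correction at all rather than guessing a value.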

“The latency values within these fields indicate the amount of time between the video or audio entering the HDMI input to the actual presentation to the user (on a display or speakers), whether that presentation is performed by the device itself or by another device downstream.”

HDMI Specification 1.3, Section 8.9.1 “EDID Latency Info”

This definition of audio and video latency is useful for AV sync and can also be applied to many other consumer electronics A/V transport interfaces, such as older analog signals or newer digital signals such as DisplayPort. All of these interfaces enable a display or amplifier to present the audio or video signal as soon as possible. In the case of a video signal, this means presenting each pixel as it arrives at the input rather than requiring a display to queue up all of the pixels of a video frame before presenting them. That said, these video specifications do not prohibit a display from queuing up pixels to display a frame all at once. If this alternative method of display is used, the pixels at the beginning of the frame will have a higher video latency than the pixels at the end of the frame, because they wait longer in the queue.
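
The difference between the two presentation methods can be sketched with a small model. It assumes that pixels arrive at a uniform rate over a frame transport time and that a queuing display presents the whole frame the moment its last pixel arrives. The function name, parameters, and the 16 ms transport time are illustrative assumptions, not values from any specification.

```python
def pixel_latency_ms(row_fraction: float,
                     frame_transport_ms: float,
                     processing_ms: float = 0.0,
                     buffered: bool = False) -> float:
    """Latency from a pixel's arrival at the input to its presentation.

    row_fraction: vertical position, 0.0 = first row, 1.0 = last row.
    buffered: True if the display queues the full frame before showing it.
    """
    if not 0.0 <= row_fraction <= 1.0:
        raise ValueError("row_fraction must be between 0.0 and 1.0")
    if buffered:
        # The pixel arrived at row_fraction * frame_transport_ms but is only
        # shown once the rest of the frame has arrived.
        queue_wait = (1.0 - row_fraction) * frame_transport_ms
    else:
        # Presented as it arrives: no time spent waiting for later pixels.
        queue_wait = 0.0
    return queue_wait + processing_ms


for name, row in (("top", 0.0), ("middle", 0.5), ("bottom", 1.0)):
    streamed = pixel_latency_ms(row, 16.0)
    queued = pixel_latency_ms(row, 16.0, buffered=True)
    print(f"{name}: streamed {streamed:.1f} ms, queued {queued:.1f} ms")
```

Under these assumptions the first row of a queued frame carries the full transport time as extra latency, while the last row carries none.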

Limitations of EDID Latency Information

While this EDID information was a great first step in enabling the new “Auto Lipsync” feature, the HDMI Specification recognized that it did not account for changes in audio or video latency introduced by changing the operation mode of the output device, such as switching a TV from “movie” to “game” mode or vice versa.7

September, 2013: HDMI “Dynamic Auto Lipsync”

Recognizing that latency may vary greatly between operation modes of a device, the HDMI Specification 2.0 introduced a new “Dynamic Auto Lipsync” feature that used CEC messages to communicate changes in audio or video latency.8

November, 2017: HDMI 2.1 eARC

HDMI eARC introduced a new automatic AV sync error correction that allows an eARC transmitter, such as a TV, to request an audio latency of the eARC receiver. The eARC receiver will report its audio latency back to the eARC transmitter.

Input Lag

A history of input lag measurements from the review & consumer perspective of consumer electronics.

As audio and video latency of consumer electronics suddenly became much higher with the introduction of digital audio and video processing, many reviewers and consumers noticed the impact on both AV sync and responsiveness of interactive applications. Because reviewers and consumers do not have access to engineering-grade test equipment such as high bandwidth oscilloscopes or logic/protocol analyzers, a number of alternative video latency measurement methods have been developed over the years that are both cheap and quick. Unfortunately, some of these methods had drawbacks in accuracy, precision, and usability.

From a user’s perspective, it is sometimes clear that there is a problematic unresponsiveness to an interactive system, but it is not clear where that unresponsiveness comes from. This phenomenon has been commonly described as “input lag”9. Although this input lag may be caused by a controller, a game system, or by the video latency of a display, it became common among users and reviewers to refer to the video latency of a display as the display’s input lag. Later, in 2012, input lag officially took on a new meaning that is different than that of video latency.

Early Measurement Methods

During the transition from CRT to LCD displays, it became common among users to use a “stopwatch method” to compare the video latency of their new LCD display to their old CRT.10 In 2009, Thomas Thiemann developed an improved stopwatch method, called SMTT, alongside a very detailed report on input lag published on prad.de. (An English Google translation and an Internet Archive copy of the original professional English translation are available.) More details are included in an interview with Thiemann published on TFTCentral.

These early methods of measuring a display’s “input lag” were actually approximate measurements of video latency, rather than input lag.

In 2008, a new consumer-facing method of measuring video latency arrived with Harmonix’s Rock Band 2. This version of the game used a Wireless Fender Stratocaster controller with a built-in optical sensor and microphone, which the game could use to measure the audio and video latency of a TV. In the case of the Xbox 360 version of the game, a 0 ms latency could be measured when holding the sensor to the top of a CRT TV. If held at the center of a display, the reported value would represent the 2012 definition of input lag, rather than video latency.

In later versions of the game, such as Rock Band 4 for Xbox One, the reported measurements were no longer calibrated accurately and could not be used to measure the input lag or video latency of a display.

2012: ICDM Standard and Leo Bodnar Lag Tester

In 2012, two important events happened in the world of consumer electronics review: the International Committee for Display Metrology (ICDM) released the Information Display Measurements Standard (IDMS) and Leo Bodnar released their first “Video Signal Input Lag Tester”.

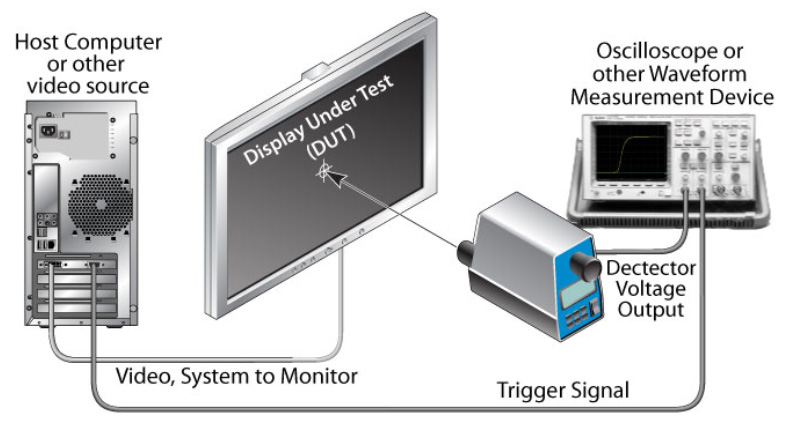

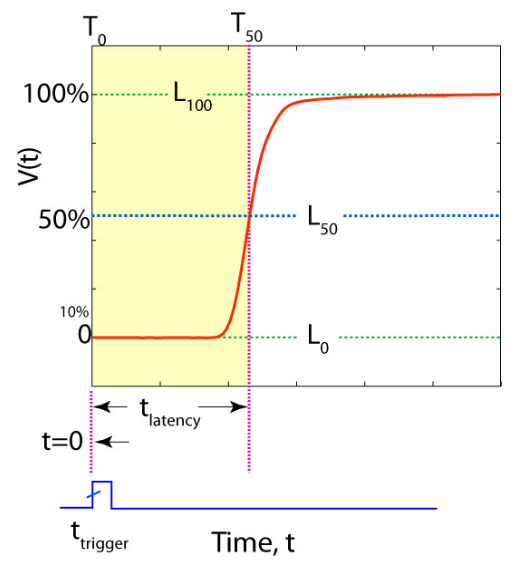

The Leo Bodnar lag tester is a conceptually simple device that provides a video signal with flashing white blocks at the top, middle, and bottom of the screen. It measures the time between the start of a video frame’s transport and the moment its light sensor detects a change in brightness. This means the device can measure input lag at the middle of the screen. Because of the known video timings of this tool, these input lag measurements can also be used to calculate the video latency at the middle of the screen.
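
The conversion from an input lag reading to video latency can be sketched as follows. This is a simplified model, not the tester's documented behavior: it assumes the timer starts when the first active line is transmitted and that active lines are sent at a uniform rate. The 1080p60 figures (1080 active lines out of 1125 total) are the standard CEA-861 timing, and the 18 ms reading is a made-up example value.

```python
def active_transport_ms(refresh_hz: float,
                        active_lines: int,
                        total_lines: int) -> float:
    """Time needed to transmit the active (visible) lines of one frame."""
    return 1000.0 * active_lines / (refresh_hz * total_lines)


def video_latency_from_input_lag(input_lag_ms: float,
                                 row_fraction: float,
                                 frame_transport_ms: float) -> float:
    """Remove the time the signal needed to reach the measured row.

    row_fraction: 0.0 = first active row, 0.5 = middle, 1.0 = last row.
    """
    return input_lag_ms - row_fraction * frame_transport_ms


# 1080p at 60 Hz: 1080 active lines out of 1125 total lines per frame.
transport = active_transport_ms(60.0, 1080, 1125)
# Hypothetical reading of 18 ms taken at the middle of the screen.
latency = video_latency_from_input_lag(18.0, 0.5, transport)
print(f"transport {transport:.1f} ms, video latency {latency:.1f} ms")
```

If the timer instead starts earlier, for example at the beginning of vertical blanking, the blanking time before the first active line would also need to be subtracted.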

Around the same time as the initial release of the Leo Bodnar lag tester, the ICDM published their method for measuring video latency at the center of the screen relative to “the time at which the change of state from black to white is to be initiated in the host system”.11, 12 This describes a measurement of input lag, which is not useful for audio/video synchronization (AV sync), but is relatively simple to measure. It is relevant to note that this document is focused purely on properties of visual displays and makes no mention of audio or audio/video synchronization. The ICDM recognized that this recommendation for the trigger signal may not be suitable for some applications:

- “Trigger: Obtaining the trigger signal may require access to electrical signals inside the display or video source electronics. The selection of an appropriate signal is application specific.”

Using a trigger point of the time when pixel data for the center of the display arrives at the display’s input would enable measurement of video latency for the application of AV sync. The Video Latency section of the IDMS remains unchanged in version 1.1 which was released in July of 2021.

A number of publications including CNET, Sound & Vision, DisplayLag, HDTVtest, Reference Home Theater, and others began coordinating a consistent method for reporting “input lag” measurements between review sites.13 They settled on using the average of all three measurements, which is almost always equal to the measurement at the middle of the screen. This method matches the guidelines from the IDMS to measure video latency at the center of a screen, which has a number of benefits described in Advanced Topics in Video Latency and Audio/Video Synchronization. This method also follows the IDMS suggestion for a timer trigger at the start of frame transport, which results in half of a frame’s transport time being included in the measurement.
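
A small worked example shows why the three-point average tends to match the middle reading. It assumes a display that presents pixels as they arrive, so each reading is the display's video latency plus the time the signal took to reach that row, and it assumes the three test blocks sit symmetrically around the center. The row positions, the 10 ms latency, and the 16 ms transport time are all hypothetical.

```python
def input_lag_ms(row_fraction: float,
                 video_latency_ms: float,
                 frame_transport_ms: float) -> float:
    """Input lag at a row when the timer starts at the start of the frame."""
    return video_latency_ms + row_fraction * frame_transport_ms


# Hypothetical block positions: top, middle, bottom (symmetric about 0.5).
rows = (0.1, 0.5, 0.9)
readings = [input_lag_ms(row, 10.0, 16.0) for row in rows]
average = sum(readings) / len(readings)

print([round(value, 1) for value in readings])
print(round(average, 1), round(readings[1], 1))
```

In this model the average and the middle reading both equal the video latency plus half of the frame transport time. A display that queues frames or refreshes in a different pattern would break the symmetry, which is presumably why the match holds almost always rather than always.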

Last updated on March 6th, 2023.

1. TechTerms, Latency Definition

2. PCMag Encyclopedia, Latency

3. Untersuchung des Testverfahrens einer Input-Lag-Messung, Die Messung des Input Lags bei einem CRT

4. HDMI Specification 2.0, Section 10.6.2 “Compensation”

5. HDMI Specification 1.3, Section 8.9 “Auto Lipsync Correction Feature”

6. HDMI Specification 1.3, Section 8.9.1 “EDID Latency Info”

7. HDMI Specification 1.3, Section 8.9.1.1 “Supporting a Range of Latency Values”

8. HDMI Specification 2.0, Section 10.7 “Supporting Dynamic Latency Changes: Dynamic Auto Lipsync”

9. Wikipedia, “Input lag”

10. flatpanelshd, “Input Lag Test”

11. IDMS 1.03, 10.3 Video Latency, Description

12. IDMS 1.03, 10.3 Video Latency, Procedure

13. Reference Home Theater, “Leo Bodnar Lag Tester Review, Comments – Chris Heinonen”